- cross-posted to:

- [email protected]

AI-screened eye pics diagnose childhood autism with 100% accuracy

Bull.Shit.

Define the criteria, have it peer reviewed and diagnosed, or else we will ALL be diagnosed with Autism soon enough.

For real.

It looks like the actual number of participants was 958, and only 15% of them were reserved for testing; the rest were used as AI training data. So in reality only about 144 people were tested with the AI, and the article gives no information on how many people in this subset were formally diagnosed.
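The split arithmetic in that comment is easy to check. This is a minimal sketch: `split_counts` is a hypothetical helper, and rounding the test share to the nearest whole participant is an assumption (the study may have split by image or rounded differently).

```python
from typing import Tuple


def split_counts(n_participants: int, test_fraction: float) -> Tuple[int, int]:
    """Return (train, test) sizes for a simple holdout split (hypothetical helper).

    The test size is rounded to the nearest whole participant; the rest go to training.
    """
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be between 0 and 1")
    n_test = round(n_participants * test_fraction)
    return n_participants - n_test, n_test


train, test = split_counts(958, 0.15)
print(f"train={train}, test={test}")  # 958 * 0.15 = 143.7, so this prints train=814, test=144
```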

The paper seems to have been published in JAMA Network Open, and as far as I can tell, that journal is peer reviewed?

Yeah, read it. No other confirmation.

But it has been peer reviewed? And the criteria have been defined?

Read the article. This is a link generator. No link to a peer reviewed paper.

At the bottom of the article there’s a link: the paper has been published in a peer-reviewed journal.

https://jamanetwork.com/journals/jamanetworkopen/fullarticle/2812964

You can’t just believe something because it’s been peer-reviewed. It is an absolutely minimal requirement for credibility these days but the system does not work well at all.

In this case, the authors acknowledge the need for more studies to establish how generalisable their findings are. It’s the first attempt at building a tool; it doesn’t mean anything at all until the findings are reproduced by an independent group.

Totally agree, peer review is the bare minimum, but it IS a step above any old article published on a random website. I’d also like to acknowledge the limitations of this particular study. It’s a fair criticism, and something the authors brought up in their paper too.

My reply was in response to the original commenter mentioning that there was no link to the study at all.


Totally agree, like for those vaccines. Just because they are published doesn’t mean they are safe! /s

Sidebar: this talk of papers reminded me of

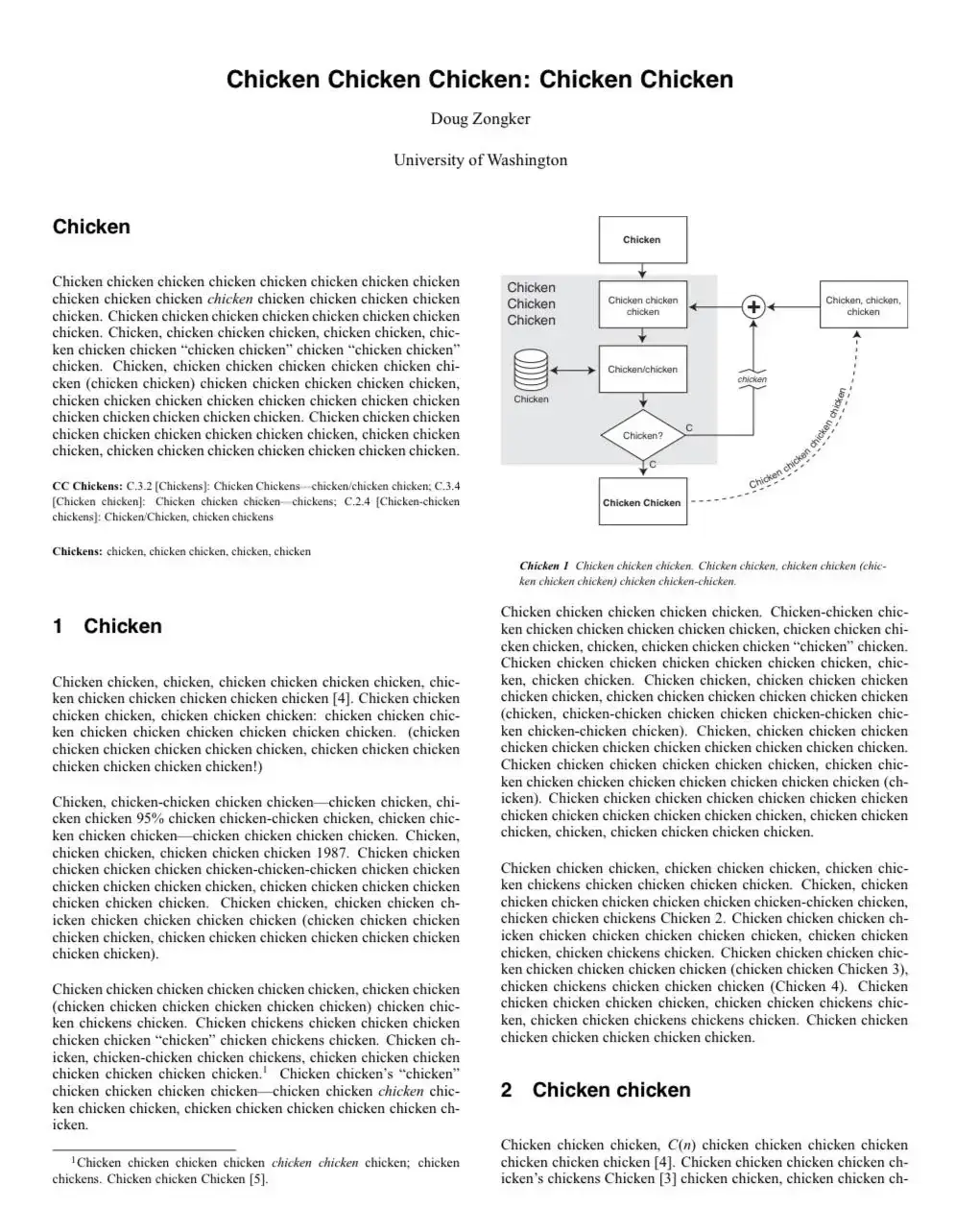

Isn’t it part of what someone printed on their neighbor’s Wi-Fi printer?

They do point to what the model was basing its decision on, which was the optic disc. They go over this in the discussion, citing multiple previous studies that show biological differences between ASD and TD development.

You know, in the peer reviewed paper linked at the bottom of OP’s article on it.


It’s apparently 100% accurate at classifying autism in groups that have already been flagged as having a high chance of ASD. It is not good at just any old picture.

Retinal photographs of individuals with ASD were prospectively collected between April and October 2022, and those of age- and sex-matched individuals with TD were retrospectively collected between December 2007 and February 2023.

TD stands for “typical development.”

So it correctly differentiated between children diagnosed with ASD and those without it with 100% accuracy.

The confounding factor is that they excluded children who had ASD along with other conditions that might have muddied the waters, so it may not be 100% effective at distinguishing between all cases of ASD vs TD.

There’s no reason to think that, given a retinal photograph of someone who hasn’t been diagnosed with ASD, it would fail to correctly confirm or reject the diagnosis if ASD was the only factor.

And this appears to be based on biological differences that have already been researched:

Considering that a positive correlation exists between retinal nerve fiber layer (RNFL) thickness and the optic disc area,[32,33] previous studies that observed reduced RNFL thickness in ASD compared with TD[14-16] support the notable role of the optic disc area in screening for ASD. Given that the retina can reflect structural brain alterations as they are embryonically and anatomically connected,[12] this could be corroborated by evidence that brain abnormalities associated with visual pathways are observed in ASD. First, reduced cortical thickness of the occipital lobe was identified in ASD when adjusted for sex and intelligence quotient.[34] Second, ASD was associated with slower development of fractional anisotropy in the sagittal stratum where the optic radiation passes through.[35] Interestingly, structural and functional abnormalities of the visual cortex and retina have been observed in mice that carry mutations in ASD-associated genes

And given that the heat maps of what the model used to differentiate the groups highlighted almost entirely the optic disc, I’m not sure why so many here are scoffing at this result.

It wasn’t 100% at identifying severity or more nuanced differences, but it successfully identified whether a retinal image was from someone diagnosed with ASD or not, with a 100% success rate on the roughly 150 test images split between the two groups.

100% ? That’s a fucking lie. Nothing in life is 100%

Are you 100% sure of that?

A convolutional neural network, a deep learning algorithm, was trained using 85% of the retinal images and symptom severity test scores to construct models to screen for ASD and ASD symptom severity. The remaining 15% of images were retained for testing.

It correctly identified 100% of the testing images. So it’s accurate.

100% accuracy is troublesome. Literally Statistics 101 stuff: they tell you in no uncertain terms, never, never trust 100% accuracy.

You can be certain to some value of p. That number is never 0. 0.001 is suspicious as fuck, but doable. 0.05 is great if you have a decent sample size.

They had fewer than 1000 participants.

I just don’t trust it. Neither should they. Neither should you. Not at least until someone else recreates the experiments and also finds this AI to be 100% accurate.

What they’re saying, as far as I can tell, is that after training the model on 85% of the dataset, the model predicted whether a participant had an ASD diagnosis (as a binary choice) 100% correctly for the remaining 15%. I don’t think this is unheard of, but I’ll agree that a replication would be nice to eliminate systematic errors. If the images from the ASD and TD sets were taken with different cameras, for instance, that could introduce an invisible difference in the datasets that an AI could converge on. I would expect them to control for stuff like that, though.
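One cheap way to look for the kind of camera confound described above is to ask how well the label can be predicted from acquisition metadata alone, ignoring the pixels entirely. The sketch below is illustrative: the record fields (`camera`, `label`) and the toy data are hypothetical, not taken from the study. If a majority-vote rule over a single metadata field already scores close to 100%, an image model may be learning that metadata rather than the anatomy.

```python
from collections import Counter, defaultdict
from typing import Dict, List


def metadata_baseline_accuracy(records: List[Dict[str, str]], field: str, label_key: str = "label") -> float:
    """Accuracy of predicting the label from one metadata field only.

    For each value of `field`, predict the most common label seen with that value.
    This is evaluated on the same records, so it is an optimistic (in-sample) check.
    """
    by_value: Dict[str, Counter] = defaultdict(Counter)
    for rec in records:
        by_value[rec[field]][rec[label_key]] += 1
    # Each field value contributes the count of its majority label as "correct" predictions.
    correct = sum(counts.most_common(1)[0][1] for counts in by_value.values())
    return correct / len(records)


# Hypothetical toy data: every ASD image came from camera "A", every TD image from camera "B".
toy = [
    {"camera": "A", "label": "ASD"},
    {"camera": "A", "label": "ASD"},
    {"camera": "B", "label": "TD"},
    {"camera": "B", "label": "TD"},
]
print(metadata_baseline_accuracy(toy, "camera"))  # 1.0: the camera alone separates the classes
```

The same check works for any field you suspect, such as collection date or clinic.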

I would expect them to control for stuff like that, though.

What was the problem with that male vs female deep-learning test a few years ago?

That all the male photos were taken earlier in the day, so the sun in the background came from one direction, while all the female photos were taken later in the day, when the sun was at a different angle? And so it turned out that the deep-learning AI had just been trained on the window in the background?

100% accuracy almost certainly means this kind of effect happened. No one gets a perfect score; all good tests should be at least a “little bit” shoddy.

Definitely possible, but we’ll have to wait for some sort of replication (or lack of) to see, I guess.

Yeah, exactly. They’re reporting findings. Saying that it worked in 100% of the cases they tested is not making a claim that it will work in 100% of all cases ever. But if they had 30 images and it classified all 30 images correctly, then that’s 100%.

The article headline is what’s misleading. First, it’s poorly written - “AI-screened eye PICS DIAGNOSE childhood autism.” The pics do not diagnose the autism, so the subject of the verb is wrong. But even if it were rephrased, stating that the AI system diagnoses autism itself is a stretch. The AI system correctly identified individuals previously diagnosed with autism based on eye pictures.

This is an interesting but limited finding that suggests AI systems may be capable of serving as one diagnostic tool for autism, based on one experiment in which they performed well. Anything more than that is overstating the findings of the study.

You need to report two numbers for a classifier, though. I can create a classifier that catches all cases of autism just by saying that everybody has autism. You also need a false positive rate.
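The point about the "everybody has autism" classifier can be made concrete with the two standard rates, sensitivity (true positive rate) and specificity (true negative rate, i.e. one minus the false positive rate). A minimal sketch with made-up labels, where 1 means positive:

```python
from typing import Sequence, Tuple


def sensitivity_specificity(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[float, float]:
    """Return (sensitivity, specificity) for binary labels where 1 = positive, 0 = negative."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    sensitivity = tp / (tp + fn)  # share of actual positives that were caught
    specificity = tn / (tn + fp)  # share of actual negatives correctly cleared
    return sensitivity, specificity


# The "everybody has autism" classifier, on made-up labels.
y_true = [1, 1, 0, 0, 0]
y_pred = [1] * len(y_true)
print(sensitivity_specificity(y_true, y_pred))  # (1.0, 0.0)
```

Perfect sensitivity with zero specificity is exactly the degenerate case described: every case is caught because every case is flagged.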

True, but as far as I can tell the AUROC measure they refer to incorporates both.

Yup, you’re right, good catch 🙂

They talk about collecting the images: the two populations of images were collected separately. That’s probably not 100% of the difference, but it might have been enough to push it up to 100%.

You mean like the infamous AI model for detecting skin cancer that, it turned out, was simply detecting whether there was a ruler in the photo, because in all of the data fed into it the skin cancer photos had rulers and the control images did not?

Then somebody’s lying with creative application of 100% accuracy rates.

The confidence interval of the sequence you describe is not 100%
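To put a number on that: a perfect score on a finite test set still leaves a range of plausible true accuracies. A minimal sketch using the exact (Clopper-Pearson) binomial interval, assuming each test case is an independent trial (which may not hold if one participant contributes several images). The sizes below are the 30-image hypothetical and the roughly 144-person test set mentioned earlier in the thread.

```python
def accuracy_lower_bound_all_correct(n: int, confidence: float = 0.95) -> float:
    """Two-sided Clopper-Pearson lower bound on accuracy when all n test cases were correct.

    With n successes out of n, the exact lower limit p solves p**n = alpha / 2.
    The upper limit in this case is 1.0.
    """
    if n < 1:
        raise ValueError("n must be positive")
    alpha = 1.0 - confidence
    return (alpha / 2.0) ** (1.0 / n)


for n in (30, 144):
    print(n, round(accuracy_lower_bound_all_correct(n), 3))
```

For 30 correct out of 30, the 95% interval on true accuracy starts at about 0.88; for 144 out of 144, at about 0.97. So "100% on the test set" is consistent with a real-world accuracy somewhat below 100%, even before worrying about confounds.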

From TFA:

For ASD screening on the test set of images, the AI could pick out the children with an ASD diagnosis with a mean area under the receiver operating characteristic (AUROC) curve of 1.00. AUROC ranges in value from 0 to 1. A model whose predictions are 100% wrong has an AUROC of 0.0; one whose predictions are 100% correct has an AUROC of 1.0, indicating that the AI’s predictions in the current study were 100% correct. There was no notable decrease in the mean AUROC, even when 95% of the least important areas of the image – those not including the optic disc – were removed.

They at least define how they get the 100% value, but I’m not an AIologist so I can’t tell if it is reasonable.
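For anyone else who isn’t an AIologist: AUROC can be computed directly as the probability that a randomly chosen positive case gets a higher score than a randomly chosen negative one, with ties counting half. The sketch below uses that pairwise (Mann-Whitney) formulation on made-up scores; it is not the study’s code. An AUROC of 1.0 means every positive was scored above every negative in the test set, so some threshold separates that test set perfectly; it says nothing by itself about cases outside it.

```python
from typing import Sequence


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Area under the ROC curve via pairwise comparison (1 = positive, 0 = negative)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0  # positive ranked above negative
            elif p == q:
                wins += 0.5  # tie counts half
    return wins / (len(pos) * len(neg))


print(auroc([0.9, 0.8, 0.3, 0.1], [1, 1, 0, 0]))  # 1.0: perfect ranking
print(auroc([0.9, 0.8, 0.3, 0.1], [0, 0, 1, 1]))  # 0.0: perfectly reversed ranking
print(auroc([0.5, 0.5, 0.5, 0.5], [1, 0, 1, 0]))  # 0.5: scores carry no information
```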

Yeah, from the way they wrote it, it sounds to me like they indirectly trained on the test set.
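One common way a test set leaks into training is when the same person contributes more than one image (for example, both eyes; an assumption for illustration) and the images are split independently, so the model sees that person during training and is then "tested" on them. A minimal sketch of splitting by participant instead of by image; `participant_id` is a hypothetical field name, and this is not the study’s pipeline:

```python
import random
from typing import Dict, List, Tuple

Record = Dict[str, str]


def split_by_participant(images: List[Record], test_fraction: float, seed: int = 0) -> Tuple[List[Record], List[Record]]:
    """Holdout split where all images of a participant land on the same side."""
    ids = sorted({img["participant_id"] for img in images})
    rng = random.Random(seed)
    rng.shuffle(ids)
    n_test = round(len(ids) * test_fraction)
    test_ids = set(ids[:n_test])
    train = [img for img in images if img["participant_id"] not in test_ids]
    test = [img for img in images if img["participant_id"] in test_ids]
    return train, test


# Hypothetical data: two images (left and right eye) per participant.
images = [{"participant_id": f"p{i}", "eye": eye} for i in range(10) for eye in ("L", "R")]
train, test = split_by_participant(images, test_fraction=0.2)
overlap = {im["participant_id"] for im in train} & {im["participant_id"] for im in test}
print(len(train), len(test), overlap)  # 16 4 set()
```

The empty overlap set is the property that matters: no participant appears on both sides of the split.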

Other aspects weren’t 100%, such as identifying the severity (which was around 70%).

But if I gave a model pictures of dogs and traffic lights, I’d not at all be surprised if that model had a 100% success rate at determining if a test image was a dog or a traffic light.

And in the paper they discuss some of the prior research around biological differences between ASD and TD ocular development.

Replication would be nice and I’m a bit skeptical about their choice to use age-specific models given the sample size, but nothing about this so far seems particularly unlikely to continue to show similar results.

Not even your statement?

Except death and taxes

taxes

- Only if you’re poor

Could we reasonably expect an AI to get something right 100% of the time if a human could do it 100% of the time?

Could you tell pretty obviously if someone has Down syndrome?

Maybe some kind of feature exists that we aren’t aware of.

I’m honestly not sure if this whole thing is a good thing or a freaking scary thing.

At the back of the eye, the retina and the optic nerve connect at the optic disc. An extension of the central nervous system, the structure is a window into the brain and researchers have started capitalizing on their ability to easily and non-invasively access this body part to obtain important brain-related information.

Column A: yes

Column B: also yes

It’s way less scary in the actual linked paper:

Given that the retina can reflect structural brain alterations as they are embryonically and anatomically connected,[12] this could be corroborated by evidence that brain abnormalities associated with visual pathways are observed in ASD.

TLDR: Abnormal developments in the brain that have visual components may closely correlate with abnormal developments in the eye.

I guess it’s time to genocide the normies. ¯\_(ツ)_/¯

Hold the fuck up. What exactly is the marker?

A big problem with this type of AI is that it’s a black box.

We don’t know what they are identifying. We give it input and it gives output. What exactly is going on internally is a mystery.

We don’t know what they are identifying. We give it input and it gives output. What exactly is going on internally is a mystery.

Counterintuitively that’s also where the benefit comes from.

The reason most AI is powerful isn’t because it can think like humans; it’s because it doesn’t. It makes associations that humans don’t, simply by consuming massive amounts of data. We humans tell it “Here’s a bajillion sample examples of X. Okay, got it? Good. Now here’s 10 bajillion samples we don’t know if they are X or not. What do you, AI, think?”

AI isn’t really a causation machine, but instead a correlation machine. The AI output effectively says “This thing you gave me later has some similarities to the thing you gave me before. I don’t know if the similarities mean anything, but they ARE similarities”.

It’s up to us humans to evaluate the answer the AI gave us and determine whether the similarities it found are useful or just coincidental.

Incidentally, to train AI, you need a bajillion samples of X and a bajillion-plus samples of not-X.

Sure, but if we could take the model generated by the AI and convert it into a set of quantifiable criteria - i.e., what is being correlated - we could use our human abilities of associative thought to gain an understanding of why this correlation may exist, possibly leading to better understanding of Autism overall.

The problem is that identifying what an AI model is doing is basically impossible. You can’t just decompile an AI model and see a bunch of logic, and you can’t view the machine code and reverse engineer it, because it isn’t code in that sense. The best way to suss it out is to throw corner cases at it and try to figure out any common themes in the false negatives and false positives.
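That kind of error analysis can start as simple bookkeeping: tally false negatives and false positives by some attribute of the input and see whether the errors cluster. A minimal sketch; the attribute name (`age_group`) and the records are hypothetical:

```python
from collections import Counter
from typing import Dict, Iterable


def error_tally(records: Iterable[Dict[str, object]], attribute: str) -> Dict[str, Counter]:
    """Count false negatives and false positives per value of `attribute`.

    Each record needs 'label' and 'pred' (1 = positive, 0 = negative) plus the attribute.
    """
    tally = {"false_negative": Counter(), "false_positive": Counter()}
    for rec in records:
        if rec["label"] == 1 and rec["pred"] == 0:
            tally["false_negative"][rec[attribute]] += 1
        elif rec["label"] == 0 and rec["pred"] == 1:
            tally["false_positive"][rec[attribute]] += 1
    return tally


records = [
    {"label": 1, "pred": 0, "age_group": "4-6"},
    {"label": 1, "pred": 0, "age_group": "4-6"},
    {"label": 0, "pred": 1, "age_group": "10+"},
    {"label": 1, "pred": 1, "age_group": "7-9"},
]
print(error_tally(records, "age_group"))
```

Here both misses fall in one hypothetical age group, which would be the "common theme" worth investigating.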

No, we just haven’t come up with a way of reverse-engineering AI models yet.

Not so much of a mystery:

There was no notable decrease in the mean AUROC, even when 95% of the least important areas of the image – those not including the optic disc – were removed.

So we know that it relates to the optic disc.

Edit: Repeated in the conclusions of the study itself:

Our findings suggest that the optic disc area is crucial for differentiating between individuals with ASD and TD.

Edit 2: Which is given more background as to what may be going on and being picked up by the model:

Considering that a positive correlation exists between retinal nerve fiber layer (RNFL) thickness and the optic disc area,[32,33] previous studies that observed reduced RNFL thickness in ASD compared with TD[14-16] support the notable role of the optic disc area in screening for ASD. Given that the retina can reflect structural brain alterations as they are embryonically and anatomically connected,[12] this could be corroborated by evidence that brain abnormalities associated with visual pathways are observed in ASD. First, reduced cortical thickness of the occipital lobe was identified in ASD when adjusted for sex and intelligence quotient.[34] Second, ASD was associated with slower development of fractional anisotropy in the sagittal stratum where the optic radiation passes through.[35] Interestingly, structural and functional abnormalities of the visual cortex and retina have been observed in mice that carry mutations in ASD-associated genes, including Fmr1, En2, and BTBR,[36-38] supporting the idea that retinal alterations in ASD have their origins at a low level.
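The masking result quoted above is related to a generic interpretability probe called occlusion sensitivity: hide one region of the input at a time and record how much the model’s score drops. The sketch below is not the authors’ procedure (they removed the least important areas and checked AUROC); it uses a hypothetical toy "model" whose score depends only on the top-left corner, standing in for a region like the optic disc, and plain Python lists instead of real images.

```python
from typing import Callable, List

Image = List[List[float]]


def occlusion_map(model: Callable[[Image], float], image: Image, patch: int, fill: float = 0.0) -> List[List[float]]:
    """Score drop when each patch x patch block of `image` is replaced by `fill`."""
    h, w = len(image), len(image[0])
    base = model(image)
    drops = []
    for top in range(0, h, patch):
        row = []
        for left in range(0, w, patch):
            occluded = [list(r) for r in image]  # copy so the original image is untouched
            for y in range(top, min(top + patch, h)):
                for x in range(left, min(left + patch, w)):
                    occluded[y][x] = fill
            row.append(base - model(occluded))
        drops.append(row)
    return drops


def corner_model(img: Image) -> float:
    """Hypothetical toy model: its score depends only on the top-left 2x2 pixels."""
    return sum(img[y][x] for y in range(2) for x in range(2))


image = [[1.0] * 4 for _ in range(4)]
print(occlusion_map(corner_model, image, patch=2))  # [[4.0, 0.0], [0.0, 0.0]]
```

Only the patch the model actually relies on produces a drop, which is the kind of evidence that lets you say a classifier "relates to the optic disc" without fully opening the black box.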

This is great. Article explains the method and sample size. This could be a great tool, and I hope it can be applied to any age. Many people who are on the spectrum and are high functioning can go most of their lives without a diagnosis while struggling to understand why the world feels so different to them.

I hope it can be applied to any age.

According to the study:

Our sequential age-based modeling suggested that retinal photographs may serve as an objective screening tool starting at least at age 4 years. Moreover, the newborn retina continues to develop and mature up to age 4 years.[44,45] Taken together, our models are potentially viable for screening children from this age onward, which is earlier than the average age of 60.48 months at ASD diagnosis.

So not any age, but fairly early on.

Sample set of 2

Sensitivity or specificity? Sensitivity is easy: just say every person is positive and you’ll find 100% of true positives. Specificity is the hard problem.
