Well yeah - because that’s not how LLMs work. They generate sentences that conform to the word-relationship statistics that were generated during the training (e.g. making comparisons between all the data the model was trained on). It does not have any kind of logic and it does not know things. It literally just navigates a complex web of relationships between words using the prompt as a guide, creating sentences that look statistically similar to the average of all trained sentences.

TL;DR: It’s an illusion. You don’t need to run experiments to realize this, you just need to understand how AI/ML works.
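To make the "word-relationship statistics" idea concrete, here is a deliberately tiny sketch. It is a bigram counter, not an LLM: real models are neural networks over tokens, so treat this only as an illustration of "pick the next word from statistics seen in training". The corpus and the function names `train_bigrams` and `generate` are made up for the example.

```python
import random
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count, for each word, which words followed it in the training text."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def generate(counts, start, max_words=8, seed=0):
    """Extend `start` by sampling each next word in proportion to its count."""
    rng = random.Random(seed)
    out = [start]
    # Stop at the length limit or when the last word was never followed by anything.
    while len(out) < max_words and out[-1] in counts:
        followers = counts[out[-1]]
        next_word = rng.choices(list(followers), weights=list(followers.values()))[0]
        out.append(next_word)
    return " ".join(out)


# Hypothetical two-sentence "training set".
corpus = ["the cat sat on the mat", "the dog sat on the rug"]
model = train_bigrams(corpus)
print(generate(model, "the"))
```

Every adjacent word pair in the output was seen in the corpus, yet the sentence as a whole may be one that never appeared in it.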

> It does not have any kind of logic and it does not know things. It literally just navigates a complex web of relationships between words using the prompt as a guide, creating sentences that look statistically similar to the average of all trained sentences.

While all of what you say is true on a technical level, it might evade the core question. Like, maybe that’s all human brains do as well, just in a more elaborate fashion. Maybe logic and knowing are emergent properties of predicting language. If these traits help to make better word predictions, maybe they evolve to support prediction.

In many cases, current LLMs have shown surprising capability to provide helpful answers, engage in philosophical discussion or show empathy. All in the duck typing sense, of course. Sure, you can brush all that away by saying “meh, it’s just word stochastics”, but maybe then, word stochastics is actually more than ‘meh’.

I think it’s a little early to take a decisive stance. We poorly understand intelligence in humans, which is a bad position from which to judge other forms. We might learn more about us and them as development continues.

Tell that to all the tech bros on the internet who are convinced that ChatGPT means AGI is just around the corner…

Very interesting, this seems so incredibly stupid it’s hard to believe it’s true.

It’s amazing how far AI has come recently, but also kind of amazing how far away we still are from a truly general AI.

That is because the I in AI as currently used is as literal as the word hover in Hoverboard. You know, those things that don’t hover, just catch on fire.

There is no intelligence in AI.

I strongly disagree. Remember, intelligence does not require consciousness; when we have that, it’s called strong AI or artificial general intelligence (AGI).

AI really has been making huge progress over the past 10 years, probably as much as in all the time before that.

These things are interesting for two reasons (to me).

The first is that it seems utterly unsurprising that these inconsistencies exist. These are language models. People seem to fall easily into the trap of believing them to have some kind of “programming” for logic.

The second is just how unscientific NN or ML is. This is why it’s hard to study ML as a science. The original paper referenced doesn’t really explain the issue or how to fix it, because there’s not much you can do to explain ML (see the second paragraph of their discussion). It’s not like the derivation of a formula, where you can point to one component and say “this is where you go wrong”.

It’s actually getting more scientific. Think of it like biology. We do a big study of an ML model or an organism and confirm a property of it.

It used to be it was just maths, you could spot an error in your code and fix it. Then it was a bag of hacks and you could keep just patching your model with more and more tweaks that didn’t have a solid theoretical basis but that improved performance.

Now it’s too big and too complex and we have to do science to understand the model limitations.

What this is about:

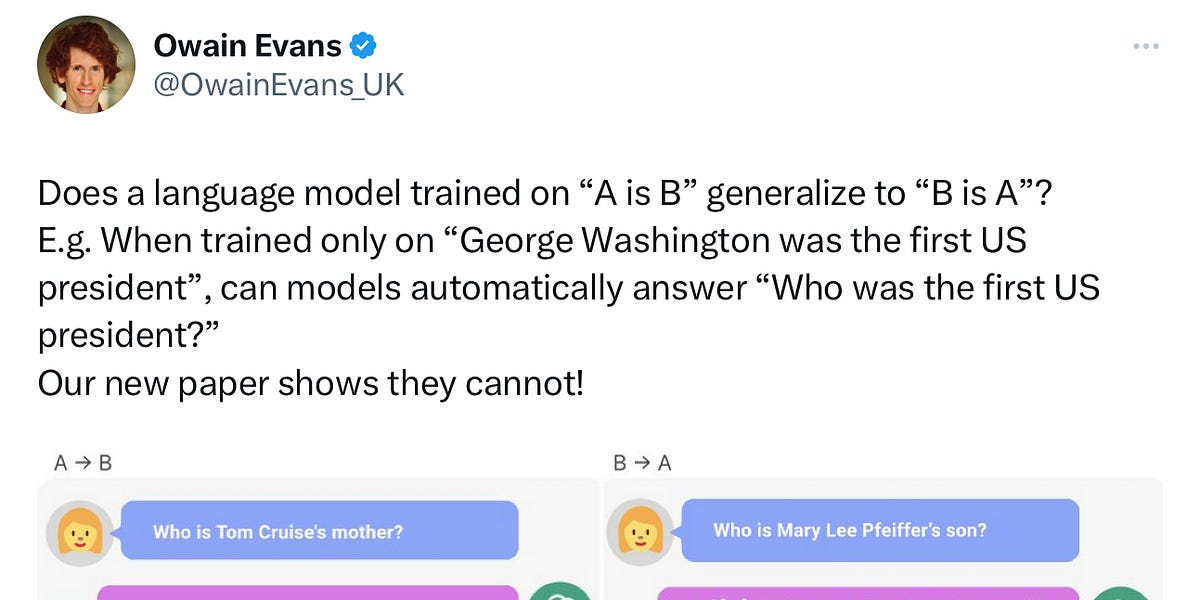

Can LLMs trained on A is B infer automatically that B is A?

Models that have memorized “Tom Cruise’s parent is Mary Lee Pfeiffer” in training fail to generalize to the question “Who is Mary Lee Pfeiffer the parent of?”. But if the memorized fact is included in the prompt, models succeed.

It’s nice that they can get the latter by matching a template, but it’s a problem that they can’t take an abstraction they superficially get in one context and generalize it to another; you shouldn’t have to ask it that way to get the answer you need.
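As a rough analogy only (this is not how an LLM stores facts), the asymmetry looks a bit like a lookup table that was built in one direction and has no reverse index. In the sketch below, `MEMORIZED`, `parent_of` and `child_of` are hypothetical names, and the regex "reading" of the prompt is a stand-in for the model using a fact that is sitting in its context.

```python
import re

# Toy stand-in for a fact "memorized" in training, stored child -> parent only.
MEMORIZED = {"Tom Cruise": "Mary Lee Pfeiffer"}


def parent_of(child):
    """Forward question: same direction as the stored fact, so a direct lookup works."""
    return MEMORIZED.get(child)


def child_of(parent, context=""):
    """Reverse question: no reverse index exists, so only the prompt text can help."""
    match = re.search(r"(.+?)'s parent is (.+?)\.?$", context.strip())
    if match and match.group(2) == parent:
        return match.group(1)
    return None


print(parent_of("Tom Cruise"))
print(child_of("Mary Lee Pfeiffer"))
print(child_of("Mary Lee Pfeiffer",
               context="Tom Cruise's parent is Mary Lee Pfeiffer."))
```

The forward lookup and the in-context version succeed, while the bare reverse question comes back empty, which mirrors the pattern described above. The analogy should not be pushed further than that.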

But wouldn’t it be possible to program it to say “Mary Pfeiffer is a common name, but one notable person she is tied to is Tom Cruise. His mother is named Mary Pfeiffer.”?

The program has to figure that out before it can say it.